norse.torch.module.encode module

Stateful encoders as torch modules.

- class norse.torch.module.encode.ConstantCurrentLIFEncoder(seq_length, p=LIFParameters(tau_syn_inv=tensor(200.), tau_mem_inv=tensor(100.), v_leak=tensor(0.), v_th=tensor(1.), v_reset=tensor(0.), method='super', alpha=tensor(100.)), dt=0.001)

Bases: torch.nn.modules.module.Module

Encodes input values as fixed (constant) input currents and simulates the spikes that occur during a number of timesteps/iterations (seq_length).

Example

    >>> data = torch.as_tensor([2, 4, 8, 16])
    >>> seq_length = 2 # Simulate two iterations
    >>> constant_current_lif_encode(data, seq_length)
    (tensor([[0.2000, 0.4000, 0.8000, 0.0000],   # State in terms of membrane voltage
             [0.3800, 0.7600, 0.0000, 0.0000]]),
     tensor([[0., 0., 0., 1.],                   # Spikes for each iteration
             [0., 0., 1., 1.]]))

- Parameters

seq_length (int) – The number of iterations to simulate

p (LIFParameters) – Initial neuron parameters.

dt (float) – Time delta between simulation steps
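The numbers in the example above are consistent with a simple Euler update of the membrane voltage. The following is a minimal plain-Python sketch of that update, not the norse implementation. The function name `constant_current_lif_sketch` is hypothetical, and both the update rule `v += dt * tau_mem_inv * ((v_leak - v) + i)` and the threshold-then-reset step are assumptions, chosen because they reproduce the documented output with the default parameters.

```python
def constant_current_lif_sketch(currents, seq_length, dt=0.001,
                                tau_mem_inv=100.0, v_leak=0.0,
                                v_th=1.0, v_reset=0.0):
    """Hypothetical helper: simulate LIF neurons driven by constant currents.

    Returns (voltages, spikes), each a list with one row per time step.
    """
    v = [0.0] * len(currents)  # assumed initial membrane voltage
    voltages, spikes = [], []
    for _ in range(seq_length):
        # Assumed Euler step: leak towards v_leak, driven by the input current.
        v = [vi + dt * tau_mem_inv * ((v_leak - vi) + i)
             for vi, i in zip(v, currents)]
        # A neuron spikes when its voltage exceeds the threshold ...
        z = [1.0 if vi > v_th else 0.0 for vi in v]
        # ... and is then reset to v_reset.
        v = [v_reset if zi else vi for vi, zi in zip(v, z)]
        voltages.append(v)
        spikes.append(z)
    return voltages, spikes


voltages, spikes = constant_current_lif_sketch([2, 4, 8, 16], seq_length=2)
print([[round(x, 4) for x in row] for row in voltages])
# [[0.2, 0.4, 0.8, 0.0], [0.38, 0.76, 0.0, 0.0]]
print(spikes)
# [[0.0, 0.0, 0.0, 1.0], [0.0, 0.0, 1.0, 1.0]]
```

Under these assumptions, larger currents cross the threshold sooner: the input 16 spikes in the first iteration and the input 8 in the second.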

- forward(input_currents)

Defines the computation performed at every call.

Should be overridden by all subclasses.

Note

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the registered hooks while the latter silently ignores them.

- class norse.torch.module.encode.PoissonEncoder(seq_length, f_max=100, dt=0.001)

Bases: torch.nn.modules.module.Module

Encodes a tensor of input values, which are assumed to be in the range [0,1], into a tensor of binary values with one additional dimension. The binary values represent input spikes.

- Parameters

- forward(x)

Defines the computation performed at every call.

Should be overridden by all subclasses.

Note

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the registered hooks while the latter silently ignores them.

- class norse.torch.module.encode.PoissonEncoderStep(f_max=1000, dt=0.001)

Bases: torch.nn.modules.module.Module

Encodes a tensor of input values, which are assumed to be in the range [0,1], into a tensor of binary values, which represent input spikes.
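Both Poisson encoders can be read as drawing an independent Bernoulli sample per input value and time step. A minimal plain-Python sketch follows; it is not the norse implementation. The function names are hypothetical, and the per-step spike probability `f_max * dt * x` is an assumption about how the parameters combine.

```python
import random


def poisson_step_sketch(values, f_max=1000, dt=0.001, rng=random):
    """Hypothetical helper: one time step of rate-coded spikes.

    Each value in [0, 1] spikes with the assumed probability f_max * dt * value.
    """
    return [1.0 if rng.random() < f_max * dt * x else 0.0 for x in values]


def poisson_sequence_sketch(values, seq_length, f_max=100, dt=0.001, rng=random):
    """Hypothetical helper: stack seq_length independent steps.

    The result has one more dimension than the input: (seq_length, len(values)).
    """
    return [poisson_step_sketch(values, f_max, dt, rng) for _ in range(seq_length)]


rng = random.Random(0)  # seeded so that repeated runs give the same spikes
spikes = poisson_sequence_sketch([0.0, 0.5, 1.0], seq_length=1000, rng=rng)
# Fraction of time steps in which each input value produced a spike.
rates = [sum(column) / len(spikes) for column in zip(*spikes)]
print(rates)
```

If this assumption holds, the documented defaults with dt = 0.001 would give a maximum per-step spike probability of 0.1 for PoissonEncoder (f_max=100) and 1.0 for PoissonEncoderStep (f_max=1000).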

- Parameters

- forward(x)

Defines the computation performed at every call.

Should be overridden by all subclasses.

Note

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the registered hooks while the latter silently ignores them.

- class norse.torch.module.encode.PopulationEncoder(out_features, scale=None, kernel=<function gaussian_rbf>, distance_function=<function euclidean_distance>)[source]¶

Bases:

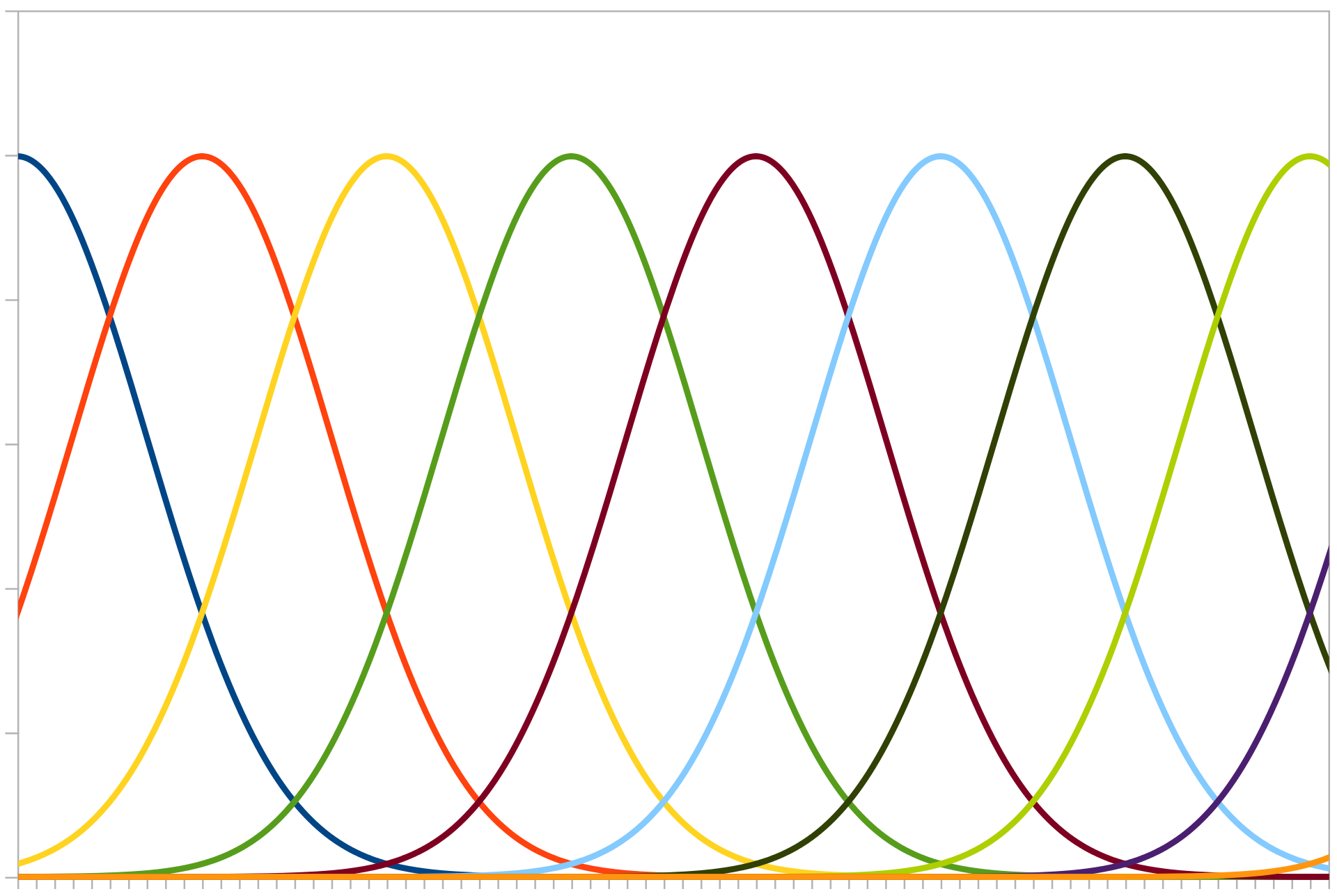

torch.nn.modules.module.ModuleEncodes a set of input values into population codes, such that each singular input value is represented by a list of numbers (typically calculated by a radial basis kernel), whose length is equal to the out_features.

Population encoding can be visualised by imagining a number of neurons in a list, whose activity increases if a number gets close to its “receptive field”.

Gaussian curves representing different neuron “receptive fields”. Image credit: Andrew K. Richardson.¶

mons.wikimedia.org/wiki/File:PopulationCode.svg

Example

    >>> data = torch.as_tensor([0, 0.5, 1])
    >>> out_features = 3
    >>> PopulationEncoder(out_features).forward(data)
    tensor([[1.0000, 0.8825, 0.6065],
            [0.8825, 1.0000, 0.8825],
            [0.6065, 0.8825, 1.0000]])

- Parameters

out_features (int) – The number of outputs per input value

scale (torch.Tensor) – The scaling factor for the kernels. Defaults to the maximum value of the input. Can also be set for each individual sample.

kernel (Callable[[Tensor],Tensor]) – A function that takes two inputs and returns a tensor. The two inputs represent the center value (which changes for each index in the output tensor) and the actual data value to encode, respectively. Defaults to the Gaussian radial basis kernel function.

distance_function (Callable[[Tensor,Tensor],Tensor]) – A function that calculates the distance between two numbers. Defaults to Euclidean.
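The population code in the example above can be reproduced with a few lines of plain Python. This is a minimal sketch, not the norse implementation. All function names are hypothetical, and the evenly spaced centres over [0, scale], the squared-difference distance and the kernel `exp(-d / 2)` are assumptions, chosen because together they reproduce the documented output for the inputs 0, 0.5 and 1.

```python
import math


def gaussian_rbf_sketch(distance, sigma=1.0):
    """Assumed kernel: a Gaussian radial basis function of a distance."""
    return math.exp(-distance / (2 * sigma ** 2))


def squared_distance_sketch(x, y):
    """Assumed distance: the squared difference between two numbers."""
    return (x - y) ** 2


def population_encode_sketch(values, out_features, scale=None,
                             kernel=gaussian_rbf_sketch,
                             distance_function=squared_distance_sketch):
    """Hypothetical helper: one row of out_features activations per value.

    Requires out_features >= 2 so that the centres can be spaced out.
    """
    if scale is None:
        scale = max(values)  # documented default: the maximum input value
    # Assumed layout: receptive-field centres evenly spaced over [0, scale].
    step = scale / (out_features - 1)
    centres = [i * step for i in range(out_features)]
    return [[kernel(distance_function(x, c)) for c in centres] for x in values]


codes = population_encode_sketch([0, 0.5, 1], out_features=3)
print([[round(a, 4) for a in row] for row in codes])
# [[1.0, 0.8825, 0.6065], [0.8825, 1.0, 0.8825], [0.6065, 0.8825, 1.0]]
```

Each row peaks at the centre closest to the encoded value and falls off with distance, which is the "receptive field" behaviour described above.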

- forward(input_tensor)

Defines the computation performed at every call.

Should be overridden by all subclasses.

Note

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the registered hooks while the latter silently ignores them.

- class norse.torch.module.encode.SignedPoissonEncoder(seq_length, f_max=100, dt=0.001)

Bases: torch.nn.modules.module.Module

Encodes a tensor of input values, which are assumed to be in the range [-1,1], into a tensor of values in {-1,0,1} with one additional dimension. The values represent signed input spikes.

- Parameters

- forward(x)

Defines the computation performed at every call.

Should be overridden by all subclasses.

Note

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the registered hooks while the latter silently ignores them.

- class norse.torch.module.encode.SignedPoissonEncoderStep(f_max=1000, dt=0.001)

Bases: torch.nn.modules.module.Module

Encodes a tensor of input values, which are assumed to be in the range [-1,1], into a tensor of values in {-1,0,1}, which represent signed input spikes.
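The signed variants can be pictured as rate coding on the magnitude of each value, with the sign carried by the spike. The plain-Python sketch below illustrates a single step and is not the norse implementation. The function name is hypothetical, and the spike probability `f_max * dt * abs(x)` is an assumption.

```python
import random


def signed_poisson_step_sketch(values, f_max=1000, dt=0.001, rng=random):
    """Hypothetical helper: one time step of signed rate-coded spikes.

    Each value in [-1, 1] spikes with the assumed probability
    f_max * dt * abs(value); the spike carries the sign of the value.
    """
    out = []
    for x in values:
        fired = rng.random() < f_max * dt * abs(x)
        sign = (x > 0) - (x < 0)  # -1, 0 or 1
        out.append(float(sign) if fired else 0.0)
    return out


rng = random.Random(0)  # seeded so that repeated runs give the same spikes
train = [signed_poisson_step_sketch([-1.0, -0.2, 0.0, 0.7], f_max=100, rng=rng)
         for _ in range(200)]
print(train[0])
```

Under the same assumption, a sequence encoder such as SignedPoissonEncoder would correspond to stacking seq_length such steps along a new leading dimension, as done with the list comprehension here.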

- Parameters

- forward(x)

Defines the computation performed at every call.

Should be overridden by all subclasses.

Note

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the registered hooks while the latter silently ignores them.

- class norse.torch.module.encode.SpikeLatencyEncoder

Bases: torch.nn.modules.module.Module

For all neurons, removes all but the first spike. This encoding effectively measures the time it takes for a neuron to spike for the first time. Assuming that the inputs are constant, this makes sense because strong inputs spike quickly.

Spikes are identified by their unique position in the input array.

Example

    >>> data = torch.as_tensor([[0, 1, 1], [1, 1, 1]])
    >>> encoder = SpikeLatencyEncoder()
    >>> encoder(data)
    tensor([[0, 1, 1],
            [1, 0, 0]])

Initializes internal Module state, shared by both nn.Module and ScriptModule.
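The first-spike filtering described above can be sketched in plain Python as follows. This is an illustration of the idea, not the norse implementation. The function name `spike_latency_sketch` is hypothetical, and the input is assumed to be a list of time steps with one entry per neuron.

```python
def spike_latency_sketch(spike_train):
    """Hypothetical helper: keep only the first spike of every neuron.

    spike_train is a list of time steps, each a list with one entry per neuron.
    """
    has_spiked = [False] * len(spike_train[0])
    output = []
    for step in spike_train:
        # Pass a spike through only if this neuron has not spiked before.
        row = [s if (s and not seen) else 0 for s, seen in zip(step, has_spiked)]
        # Remember which neurons have spiked so far.
        has_spiked = [seen or bool(s) for s, seen in zip(step, has_spiked)]
        output.append(row)
    return output


print(spike_latency_sketch([[0, 1, 1], [1, 1, 1]]))
# [[0, 1, 1], [1, 0, 0]]
```

The second and third neurons spike in the first time step, so their spikes in the second step are dropped. The first neuron spikes for the first time in the second step, so that spike is kept.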

- forward(input_spikes)

Defines the computation performed at every call.

Should be overridden by all subclasses.

Note

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the registered hooks while the latter silently ignores them.

- class norse.torch.module.encode.SpikeLatencyLIFEncoder(seq_length, p=LIFParameters(tau_syn_inv=tensor(200.), tau_mem_inv=tensor(100.), v_leak=tensor(0.), v_th=tensor(1.), v_reset=tensor(0.), method='super', alpha=tensor(100.)), dt=0.001)

Bases: torch.nn.modules.module.Module

Encodes an input value by the time the first spike occurs. Similar to the ConstantCurrentLIFEncoder, but the LIF can be thought to have an infinite refractory period.

- Parameters

seq_length (int) – Number of time steps in the resulting spike train.

p (LIFParameters) – Parameters of the LIF neuron model.

dt (float) – Integration time step (should coincide with the integration time step used in the model)

Initializes internal Module state, shared by both nn.Module and ScriptModule.
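To illustrate how a constant input maps to a spike time, the plain-Python sketch below integrates LIF neurons and suppresses every spike after the first. It is not the norse implementation. The function name is hypothetical, and the Euler update, threshold test and reset are assumptions that mirror the default values of `LIFParameters` shown in the signature.

```python
def spike_latency_lif_sketch(currents, seq_length, dt=0.001,
                             tau_mem_inv=100.0, v_leak=0.0,
                             v_th=1.0, v_reset=0.0):
    """Hypothetical helper: LIF encoding with at most one spike per neuron."""
    v = [0.0] * len(currents)  # assumed initial membrane voltage
    has_spiked = [False] * len(currents)
    train = []
    for _ in range(seq_length):
        # Assumed Euler step of the membrane voltage under a constant current.
        v = [vi + dt * tau_mem_inv * ((v_leak - vi) + i)
             for vi, i in zip(v, currents)]
        z = [vi > v_th for vi in v]
        v = [v_reset if zi else vi for vi, zi in zip(v, z)]
        # Suppress every spike after a neuron's first one.
        train.append([1.0 if (zi and not seen) else 0.0
                      for zi, seen in zip(z, has_spiked)])
        has_spiked = [seen or zi for zi, seen in zip(z, has_spiked)]
    return train


train = spike_latency_lif_sketch([2, 4, 8, 16], seq_length=10)
# Index of the time step in which each neuron spikes (None if it never does).
first_spike = [next((t for t, row in enumerate(train) if row[n]), None)
               for n in range(4)]
print(first_spike)
# [6, 2, 1, 0]
```

In this sketch stronger currents produce earlier spikes, so the step index of the single spike carries the input value.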

- forward(input_current)

Defines the computation performed at every call.

Should be overridden by all subclasses.

Note

Although the recipe for the forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this, since the former takes care of running the registered hooks while the latter silently ignores them.